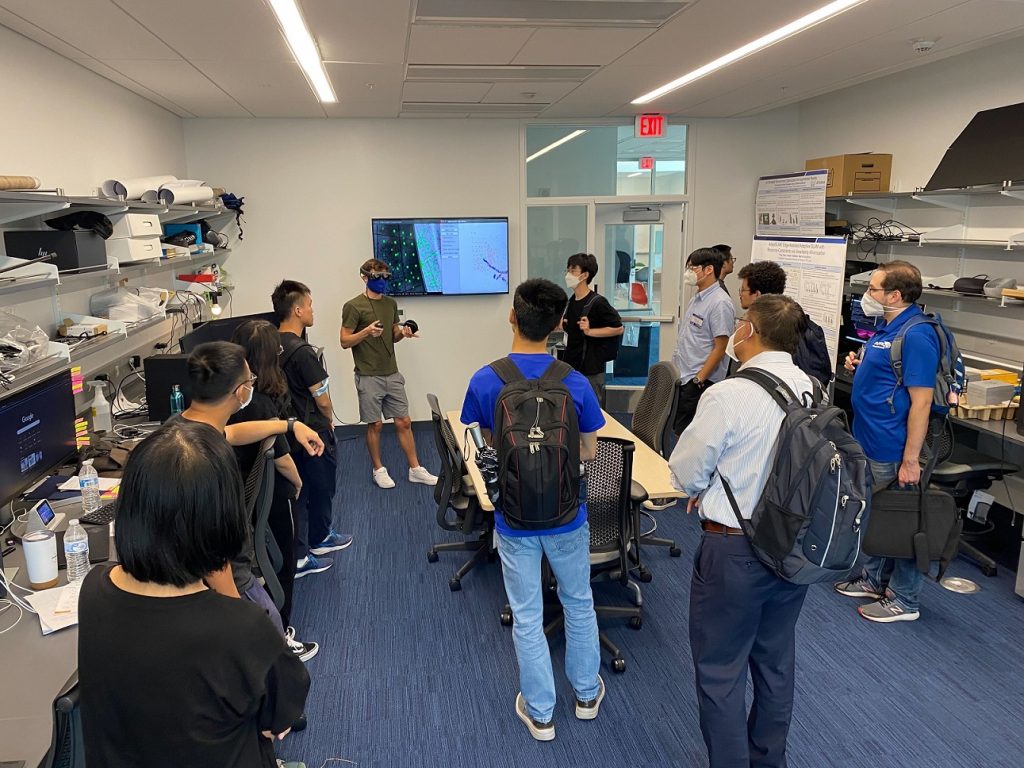

It was our sincere pleasure to offer a Duke I3T Lab Tour as part of the National Academy of Engineering Regional Meeting held at Duke University in May 2026.

May 2026 NAE Regional Meeting attendees exploring live AR and VR demonstrations as part of the Duke University Pratt School of Engineering lab tour

Just like the Frontiers of Engineering (FOE) NAE Symposium I attended back in 2021, the Regional Meeting was a real treat: it outlined both the promise and the challenges in the exciting and important space of commercial aviation, from multiple technical and non-technical perspectives. Like the FOE Symposium, it reminded me how exciting it is to be an engineer: to not only have an in-depth understanding of the problems and the underlying processes, but also know how to solve them. I am thankful to the organizers for the opportunity to participate in this event and to contribute to it.